In 2016, during a review meeting at Jaguar Land Rover’s Whitley Engineering Centre, a potential defect surfaced in an infotainment integration that had been under testing for weeks. The issue itself was minor, but the room fell silent. Everyone present understood what an unresolved “minor” defect could become months later: a fault embedded in a physical vehicle rolling off the production line at Castle Bromwich and, ultimately, in the hands of a customer.

That moment captures a lesson rarely taught in formal education: in complex systems, the cost of ignoring problems does not grow linearly. It compounds and escalates until it reaches the end user, often at significant financial and reputational cost.

Over a decade at Jaguar Land Rover, Mustasam Akhtar Abbasi progressed from Diagnostic Engineer to Head of IVI (in-vehicle infotainment) and Connected Car Validation, leading teams of more than a hundred engineers, project managers, and test specialists. His work spanned infotainment validation, system-level automation, diagnostics, and fleet management, including contributions to the award-winning Control Touch Pro system, one of the most advanced in-vehicle interfaces of its time.

Since co-founding Ziel Global, his focus has shifted to advising organisations on digital transformation, product strategy, and startup mentorship across the UK, Malaysia, and Pakistan. Yet one core insight continues to stand out: much of what defines effective technology leadership at scale was learned while building and validating software for physical vehicles.

This is not a story about cars. It is about how thinking evolves in environments where software failures are tangible, integration complexity is physical, and leadership decisions directly affect systems used by millions.

Scale Is Not a Headcount Problem

In many technology organisations, “scale” is often equated with team size. More people, better tools, stronger middle management. However, experience at Jaguar Land Rover suggests otherwise.

Scale is fundamentally about dependencies.

Within a single programme, dependencies span infotainment teams, integration and testing, electrical engineering, manufacturing plants, supplier ecosystems, and business planning functions. Each operates with its own timelines, definitions of completion, and tolerance for risk.

When a dependency fails, the consequences are not limited to missed sprints—they can delay entire vehicle launches.

At this level, leadership shifts from solving problems directly to designing systems that surface problems early. The value of a leader is no longer measured by individual technical contributions, but by the environment created for others to succeed.

This includes:

- Processes that detect issues early

- Clear escalation pathways that people can use without fear

- Review systems that prioritise signal over reassurance

- Acceptance that problems will surface, and that a healthy system resolves many of them without leadership intervention

Modern AI teams often struggle with this transition. Small teams can rely on individual brilliance. Scaled systems serving millions cannot. The gap is not technical—it is organisational.

Quality Is a Values Problem Before It Is a Process Problem

As Forward Quality Engineering Manager, Abbasi never treated quality as a final checkpoint. It was embedded throughout the engineering lifecycle.

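A well-known manufacturing rule of thumb, often summarised as the 1-10-100 rule, illustrates this clearly. The cost of fixing a defect rises roughly tenfold with each stage it slips through:

- Caught at component level: 1x

- Caught at integration level: 10x

- Caught after launch: 100x

- Caught in the market: the cost extends beyond money to reputation and legal risk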

The critical question was never “Does this pass the test?” but rather, “Does this deserve to pass?”

This distinction requires judgment, context, and a deep understanding of real-world user experience, not just compliance with specifications.

The same pattern is visible in AI systems today. Models that perform well on controlled benchmarks can still fail in production because of bias, edge cases, or untested real-world scenarios. The root causes are rarely purely technical; they are cultural.

Responsible AI is, in essence, forward quality engineering applied to machine learning.

Organisations that succeed treat quality as a shared responsibility:

- Engineers challenge flawed requirements

- Data teams question training assumptions early

- Leaders support delaying releases when necessary

This culture is not created through documentation—it is demonstrated through consistent leadership decisions.

Integration Is the Hardest Problem—and the Most Ignored

The development of Control Touch Pro highlighted a critical truth: the hardest challenges were not technical, but organisational.

Multiple teams, including programme management, electrical systems, suppliers, and business units, operated with legitimate but conflicting priorities. Individually rational decisions often created collective dysfunction at integration points.

Failures at these boundaries tend to emerge quietly, because each team assumes ownership lies elsewhere.

A significant portion of leadership effort was therefore spent on:

- Creating shared understanding across teams

- Making dependencies visible

- Establishing a common language for problem-solving

- Facilitating productive, rather than defensive, conversations

This pattern extends directly to digital transformation efforts.

Failures rarely occur because technology does not work. They occur because:

- Teams cannot agree on ownership

- Dependencies are unclear

- Users experience systems differently than expected

The solution lies not in better tools, but in better integration thinking:

- Build shared language early

- Maintain visible dependency maps

- Design governance systems that expose boundary issues quickly

What Automotive Does Not Prepare Leaders For

Despite its strengths, automotive engineering does not fully prepare leaders for modern digital environments.

1. Pace

Automotive operates on multi-year cycles. Software moves in weeks. Transitioning requires recalibrating risk tolerance—distinguishing between reversible and irreversible decisions.

2. Talent Models

Automotive values deep specialists. Digital environments often require adaptable generalists capable of connecting multiple domains.

3. Decision-Making Under Ambiguity

Automotive systems operate with defined specifications. Many modern technology decisions must be made without complete information, where waiting for certainty carries its own risks.

Experienced engineers often find this transition challenging, as their expertise is rooted in eliminating uncertainty rather than navigating it.

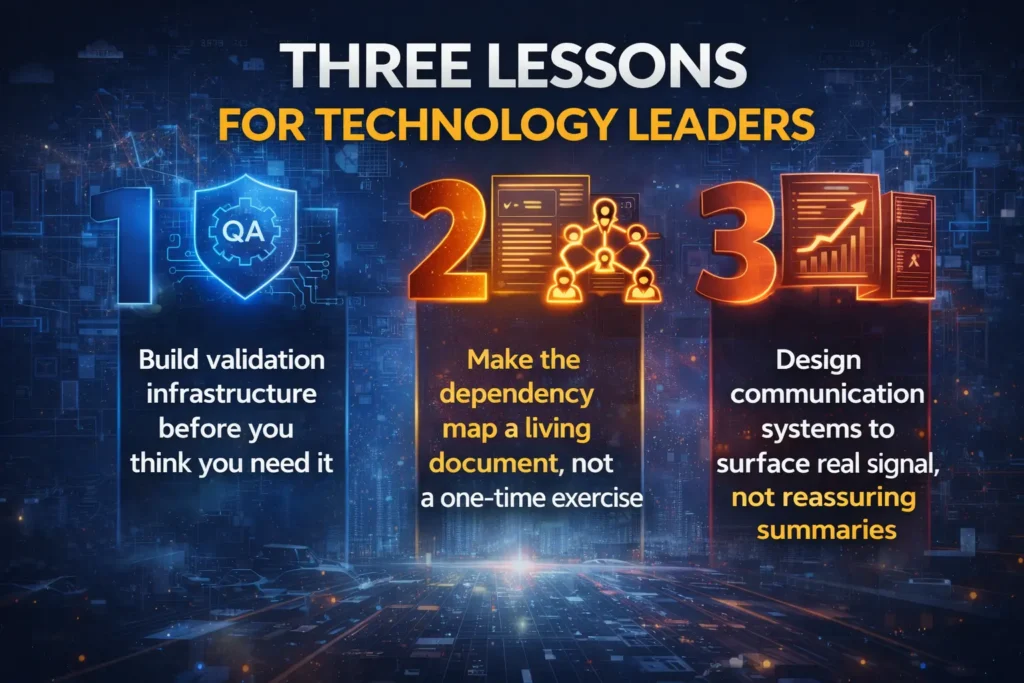

Three Lessons for Technology Leaders

1. Build Validation Infrastructure Early

Reliable validation cannot be built under pressure. Organisations must invest early in systems that detect unexpected behaviour before it turns into a failure, not after.

2. Treat Dependency Mapping as a Living System

Dependencies evolve continuously. Maintaining an up-to-date, visible map ensures risks are identified before they become failures.

3. Design Communication for Truth, Not Comfort

Leaders must receive unfiltered, high-signal information. Systems should encourage transparency, even when the message is complex or inconvenient.

Where This Leads

The most enduring lesson from automotive engineering is not about vehicles; it is about systems thinking.

Struggling AI deployments often reveal integration failures. Startups accumulating technical debt expose quality gaps. Delayed transformation programmes highlight unaddressed dependencies.

The tools may change, but the underlying dynamics of complex systems remain constant.

The most effective technology leaders internalise this reality. They:

- Move deliberately, not recklessly

- Build systems to manage uncertainty

- Recognise that the most critical decisions are often organisational, not technical

In many ways, the best AI leaders think like automotive engineers: aware of the consequences of failure at scale, and committed to preventing failures long before they occur.

The key questions are not technical:

- What failure would be hardest to recover from?

- Which dependencies are assumed but unverified?

- What signals might be filtered without awareness?

These are not questions that require a decade in automotive to ask. They simply require the discipline to ask them early—and the courage to act on the answers.